Explaining What Artificial Intelligence Is Without Melting Your Brain

Artificial intelligence (AI) has been a topic of interest for a long time, and countless articles have been written about it. Most of them, though, use jargon and overly complicated explanations that make people’s brains ache and leave them even more confused than when they started.

Also, the first thing most people probably think of when they hear the words artificial intelligence is robots: most likely big man Arnold Schwarzenegger’s Terminator hulking around saying ‘I’LL BE BACK’ and firing a massive shotgun or, for the millennials reading this, Ultron from the Avengers movies just wreaking havoc. They are not wrong.

So let’s start with a quick history lesson.

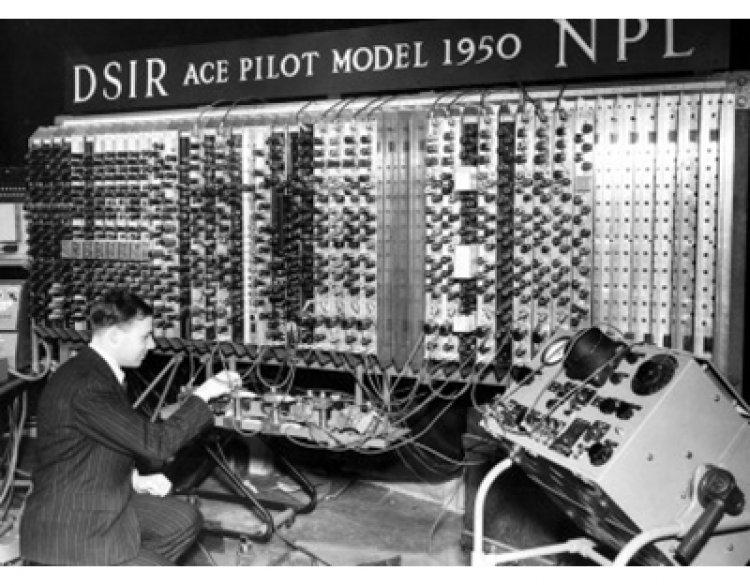

A British mathematician named Alan Turing helped the Allied Forces win World War II by cracking the Nazi cipher machine called Enigma. As if that wasn’t marvelous enough, about ten years later he shook the technological world again with one simple question, "Can machines think?", in his 1950 paper ‘Computing Machinery and Intelligence’.

If you haven’t heard of Alan Turing, watch a movie called The Imitation Game, starring Dr. Strange himself, Benedict Cumberbatch.

AI involves using computers to do things that normally need human intelligence. This means creating algorithms (a set of instructions given to a computer) to categorize, analyze, and derive predictions from data. It also involves acting on data, learning from new data, and improving over time. Just like a tiny human baby growing into a (sometimes) smarter human adult. And like humans, AI is not perfect. Yet.

Theoretically, AI should be able to perform tasks intelligently, think rationally and humanly, and act without explicit instructions.

AI is all over our personal and professional lives:

- Task automation: repetitive back-office tasks such as clerical work, invoicing, and management reporting can be automated to save time and improve accuracy. Factory and warehouse work can also be automated using AI-powered robots.

- Customer support: remember the online text chat you had with your bank’s customer support? That may have been a chatbot instead of an actual human.

- Social media: Facebook uses AI to recognize faces. When you post photos on Facebook, it puts a box around the faces in the photo and suggests friends’ names to tag.

- Self-driving cars: onboard cameras and computers identify objects and people on the road, follow traffic signs, and drive the car. Supporters argue that they could eventually be safer than human drivers.

To date, even the best AI can't compete with the human brain in one important way: range. Some AIs are supposed to mimic the human brain, but today's AIs are only suitable for a relatively narrow range of tasks. AI can apply tremendous analytical power to a narrow set of data and methods. The brain, on the other hand, applies moderate analytical power to a much wider range of data and methods.

Put simply, humans can apply their brains to anything, while AI specializes in certain things.

AI is used in more ways than most people realize. Three of the main approaches behind it are machine learning, neural networks, and deep learning.

Machine learning algorithms identify patterns and/or predict outcomes. Many companies sit on vast data sets about customers, business processes, or finances, and human analysts have limited time and brainpower to process and analyze all of it. Machine learning can be used to predict outcomes given input data, find patterns in large data sets that human eyes sometimes miss and, most importantly, do a lot more in less time. So goodbye, grunt work.
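To make "predict outcomes given input data" a bit more concrete, here is a minimal sketch in Python. It fits a straight line to a handful of made-up numbers and then uses that line to guess a value it has never seen. The `months` and `invoices` lists and the function names `fit_line` and `predict` are all invented for illustration. Real machine learning systems use far more data and fancier models, so treat this as a toy.

```python
def fit_line(xs, ys):
    """Find the straight line y = slope * x + intercept that best fits the data
    (ordinary least squares)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # How much x and y move together, and how much x moves on its own.
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, x):
    """Use the fitted line to guess y for a new x."""
    slope, intercept = model
    return slope * x + intercept


# Hypothetical data: month number vs. invoices processed (made up).
months = [1, 2, 3, 4, 5]
invoices = [3, 5, 7, 9, 11]

model = fit_line(months, invoices)      # the "learning" step
print(predict(model, 6))                # the "predicting" step, prints 13.0
```

The pattern here (two more invoices every month) is easy to spot by eye. The point of machine learning is that the same idea keeps working when there are millions of rows and hundreds of columns.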

Netflix applies machine learning to your viewing history to personalize the TV show and movie recommendations for you to watch. Netflix also seduces you by analyzing what you and people with similar taste have watched before and automatically creates personalized thumbnails and graphics for movies and TV shows that you would normally skip over. All to ensure that you stay glued to the screen while your brain melts.
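The "people with similar taste" idea can be sketched in a few lines of Python. To be clear, this is not Netflix's actual system, which is far more sophisticated. It is a toy under stated assumptions: the viewers, the show titles, and the functions `similarity` and `recommend` are all hypothetical. It scores each unseen show by how much its fans' viewing histories overlap with yours.

```python
def similarity(a, b):
    """Jaccard similarity: shared titles divided by all titles either viewer watched."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def recommend(user, histories, top_n=3):
    """Suggest unseen titles, weighted by how similar their viewers are to `user`."""
    seen = histories[user]
    scores = {}
    for other, watched in histories.items():
        if other == user:
            continue
        weight = similarity(seen, watched)
        for title in watched - seen:          # only titles the user has not seen
            scores[title] = scores.get(title, 0.0) + weight
    # Highest score first; ties broken alphabetically so the result is stable.
    ranked = sorted(scores, key=lambda title: (-scores[title], title))
    return ranked[:top_n]


# Hypothetical viewing histories (all names made up).
histories = {
    "you":  {"space_drama", "time_travel_thriller", "tech_dystopia"},
    "alex": {"space_drama", "time_travel_thriller", "mystery_cult"},
    "sam":  {"period_romance", "royal_saga", "tech_dystopia"},
}

print(recommend("you", histories))
# prints ['mystery_cult', 'period_romance', 'royal_saga']
```

Alex shares two of your three shows, so Alex's other pick floats to the top. Sam only shares one, so Sam's picks rank lower.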

A neural network tries to copy the human brain’s approach to analyzing data. It can recognize, categorize, and analyze diverse data, deal with many variables, and identify patterns that are too complex for human brains to see.

Deep learning is a subset of machine learning built on neural networks. With it, networks can learn from unstructured data (data that is not classified or tagged) with little or no human supervision. This is great for analyzing the "big data" collected by an organization, since these large datasets include data in various forms such as text, images, video, and audio. Neural networks are often combined with other machine learning techniques and with computer vision (teaching computers to draw meaning from images). IT professionals call the networks involved ‘deep neural networks,’ which basically means neural networks with more than two layers. More layers = more analytical power.
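If "layers" sounds abstract, the sketch below shows roughly what they are: each layer takes a list of numbers, mixes them using weights, and passes the result on to the next layer. This is a simplified illustration in plain Python, assuming a sigmoid activation and hand-picked weights. The names `layer`, `forward`, and `network` are made up, and a real network would learn its weights from data instead of having them typed in.

```python
import math


def sigmoid(x):
    """Squash any number into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-x))


def layer(inputs, weights, biases):
    """One layer: every neuron takes a weighted sum of the inputs,
    adds its bias, and squashes the result."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]


def forward(inputs, layers):
    """Pass the data through each layer in turn."""
    values = inputs
    for weights, biases in layers:
        values = layer(values, weights, biases)
    return values


# A hypothetical three-layer network with made-up weights:
# 2 inputs -> 3 neurons -> 2 neurons -> 1 output.
network = [
    ([[0.5, -0.2], [0.3, 0.8], [-0.6, 0.1]], [0.0, 0.1, -0.1]),
    ([[0.4, -0.5, 0.2], [0.7, 0.1, -0.3]], [0.05, -0.05]),
    ([[1.0, -1.0]], [0.0]),
]

output = forward([1.0, 2.0], network)
print(output)  # a single number between 0 and 1
```

Stacking more entries into `network` is all "going deeper" means in this toy version.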

Deep neural networks can be taught to identify and categorize objects. A cool use is facial recognition: identifying unique faces in photos and videos. Neural networks also learn over time. For instance, they get better at categorizing objects and identifying faces as they are fed more data.
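Here is a tiny sketch of what "learning over time" can look like. It trains a single artificial neuron (a perceptron, the simplest building block, not a deep network) to tell apart four made-up examples, nudging its weights every time it gets one wrong. The data, the function names `guess` and `train`, and the settings are assumptions for illustration. Facial recognition works on the same learn-from-mistakes principle but at a vastly larger scale.

```python
def guess(weights, bias, inputs):
    """The neuron answers 1 if the weighted sum is positive, otherwise 0."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train(examples, passes=10, learning_rate=1.0):
    """Show the neuron the examples repeatedly, correcting it after each mistake."""
    weights = [0.0, 0.0]
    bias = 0.0
    mistakes_per_pass = []
    for _ in range(passes):
        mistakes = 0
        for inputs, target in examples:
            error = target - guess(weights, bias, inputs)
            if error != 0:
                mistakes += 1
                # Nudge the weights in the direction that reduces the error.
                weights = [w + learning_rate * error * x
                           for w, x in zip(weights, inputs)]
                bias += learning_rate * error
        mistakes_per_pass.append(mistakes)
    return weights, bias, mistakes_per_pass


# Hypothetical task: answer 1 if at least one of the two inputs is switched on.
examples = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 1)]

weights, bias, mistakes_per_pass = train(examples)
print(mistakes_per_pass)  # mistakes made on each pass through the data
```

After enough passes the neuron stops making mistakes on these examples, which is the small-scale version of a network getting better as it is fed more data.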

A university in eastern China has implemented an AI-powered attendance system, with cameras that constantly observe students in class. It scans faces to check that each student has shown up to class. It also analyzes facial expressions in real time, and can actually judge whether students are paying attention. It can apparently identify people who are sleeping or playing on their phones. Aren’t you ridiculously glad you’re not a student in China?

AI has become a constant in our lives without us really noticing it. It is also one of the most debated topics in the technological world: one side shows how beneficial it is and how much of the world already runs on it, while the other urges people to think about the dangers AI would pose if we rely on it too much or if it goes badly wrong. One thing is indisputable, though: AI is only going to improve drastically. Let’s just hope that it doesn’t turn into Decepticons.